I got the following error:

`Failed to parse the model, please check the encoding key to make sure it's correct`

`UffParser: Validator error: FirstDimTile_0: Unsupported operation _BatchTilePlugin_TRT`

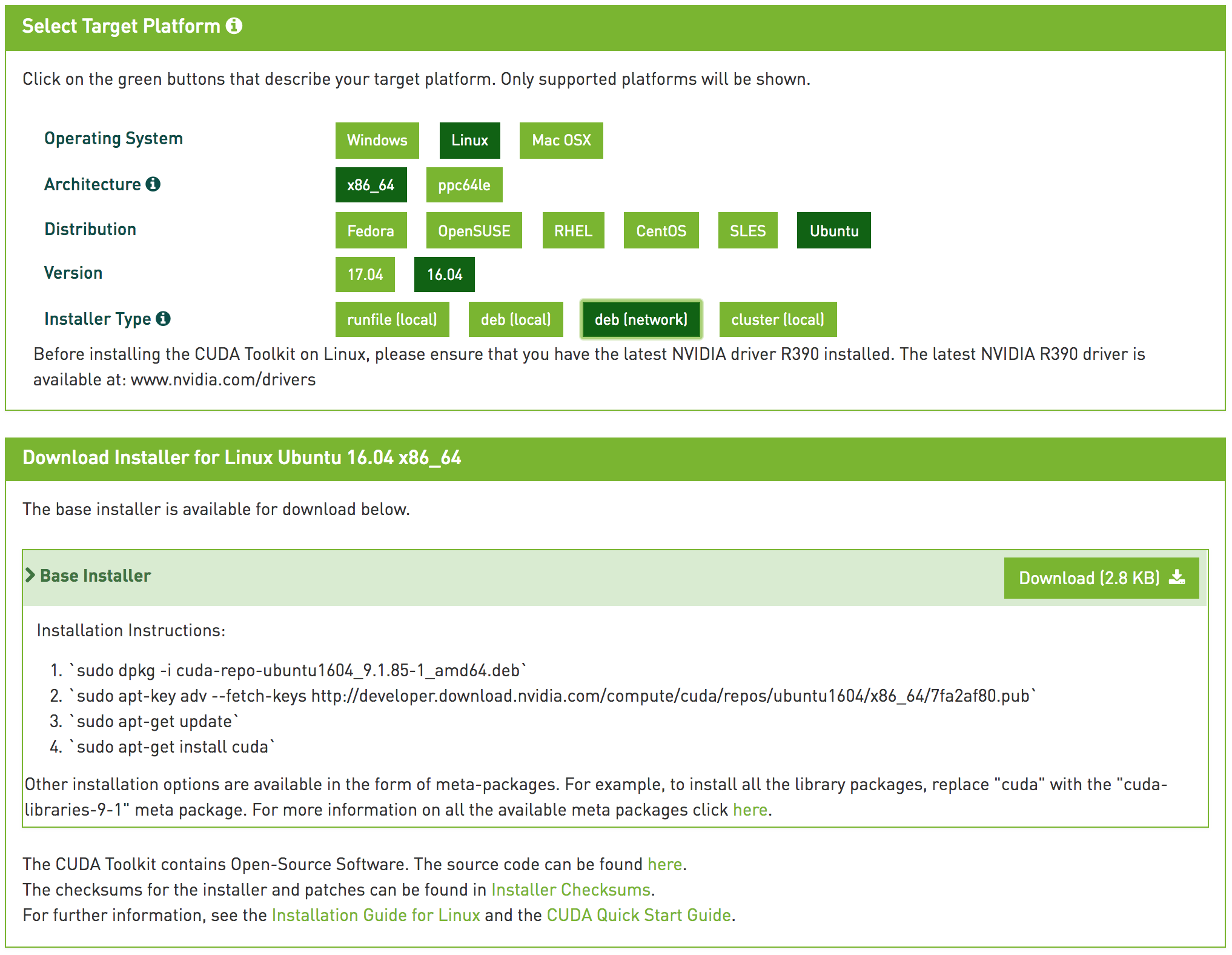
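The first message suggests checking the encoding key. The more specific `UffParser` line, however, names the node (`FirstDimTile_0`) and the operation the parser rejected (`_BatchTilePlugin_TRT`). Below is a minimal sketch that pulls those names out of converter output, so you know which op to look for in your TensorRT installation. It assumes the log uses exactly the format shown above. `find_unsupported_ops` is a hypothetical helper and is not part of TLT or TensorRT.

```python
import re
from typing import List, Tuple

# Assumes the validator line looks exactly like:
# "UffParser: Validator error: <node>: Unsupported operation <op>"
UNSUPPORTED_OP = re.compile(
    r"Validator error:\s*(?P<node>[^:\n]+):\s*Unsupported operation\s+(?P<op>\S+)"
)


def find_unsupported_ops(log_text: str) -> List[Tuple[str, str]]:
    """Return (node, op) pairs for every 'Unsupported operation' error in the log."""
    return [
        (m.group("node").strip(), m.group("op"))
        for m in UNSUPPORTED_OP.finditer(log_text)
    ]


if __name__ == "__main__":
    log = (
        "Failed to parse the model, please check the encoding key to make sure it's correct\n"
        "UffParser: Validator error: FirstDimTile_0: Unsupported operation _BatchTilePlugin_TRT\n"
    )
    for node, op in find_unsupported_ops(log):
        print(f"node {node} uses unsupported op {op}")
```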

Then I tried to convert the `.etlt` model to a TensorRT engine with `tlt-converter -k Y2dtMXZ0M3E2dHRzcGtqZTQ4a25KMWRhaGM6ZWQ4N2U5MjktMDhhMS00ZVUxLTk0MTItZWQ5MjJlYTRjYmI0`. The converter's help text ("Generate TensorRT engine from exported model") lists these arguments:

- `input_file`: input file (`.etlt` exported model).
- `-d`: comma-separated list of input dimensions (not required for TLT 3.0 new models).
- `-c`: calibration cache file (default `cal.bin`).
- `-e`: file the engine is saved to (default `saved.engine`).
- `-i`: input dimension ordering, one of `nchw`, `nhwc`, `nc` (default `nchw`).
- `-m`: maximum TensorRT engine batch size (default 16). If you hit an out-of-memory issue, decrease the batch size accordingly.
- `-o`: comma-separated list of output node names (default none).
- `-p`: comma-separated list of optimization profile shapes in the format `<input_name>,<min_shape>,<opt_shape>,<max_shape>`, where each shape has the format `<n>x<c>x<h>x<w>`. It can be specified multiple times if the model has multiple input tensors, and it is only useful in the dynamic-shape case.
- `-s`: TensorRT `strict_type_constraints` flag for INT8 mode (default false).
- `-t`: TensorRT data type, one of `fp32`, `fp16`, `int8` (default `fp32`).
- `-u`: use DLA core N for layers that support DLA (default -1, which means no DLA core is used for inference). GPU fallback is always allowed.
- `-w`: maximum workspace size of the TensorRT engine (default `1<<30`). If you hit an out-of-memory issue, increase the workspace size accordingly.

That was the problem: there were pending updates. After updating, CUDA 10.2, cuDNN 8.0 and TensorRT 7.2 are all installed. Thank you.
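If you script the conversion, the options listed above can be assembled into an argument list instead of a hand-typed shell line. The sketch below uses a hypothetical helper, `build_converter_args`. It covers only a subset of the flags (`-k`, `-e`, `-t`, `-i`, `-m`, `-w`, `-o`, `-d`, `-p`) and validates `-t` and `-i` against the values in the help text. The `-p` layout `<input_name>,<min_shape>,<opt_shape>,<max_shape>` with `<n>x<c>x<h>x<w>` shapes is taken from the help text's placeholder format. The file names, key and input name in the usage example are placeholders.

```python
import shlex
from typing import List, Optional, Sequence, Tuple

# Values accepted by -t and -i according to the tlt-converter help text.
VALID_DTYPES = ("fp32", "fp16", "int8")
VALID_ORDERS = ("nchw", "nhwc", "nc")
# Documented -w default: 1 << 30 bytes (1 GiB).
DEFAULT_WORKSPACE = 1 << 30

Shape = Sequence[int]
# (input_name, min_shape, opt_shape, max_shape); this tuple layout is an assumption
Profile = Tuple[str, Shape, Shape, Shape]


def format_shape(dims: Shape) -> str:
    """Join a shape such as (1, 3, 544, 960) into '1x3x544x960'."""
    return "x".join(str(d) for d in dims)


def build_converter_args(
    etlt_file: str,
    key: str,
    engine_file: str = "saved.engine",
    data_type: str = "fp32",
    input_order: str = "nchw",
    max_batch_size: int = 16,
    workspace_size: int = DEFAULT_WORKSPACE,
    output_nodes: Optional[Sequence[str]] = None,
    input_dims: Optional[Shape] = None,
    profiles: Optional[Sequence[Profile]] = None,
) -> List[str]:
    """Build a tlt-converter argument list (hypothetical helper, subset of flags)."""
    if data_type not in VALID_DTYPES:
        raise ValueError(f"-t must be one of {VALID_DTYPES}, got {data_type!r}")
    if input_order not in VALID_ORDERS:
        raise ValueError(f"-i must be one of {VALID_ORDERS}, got {input_order!r}")

    args = [
        "tlt-converter",
        "-k", key,
        "-e", engine_file,
        "-t", data_type,
        "-i", input_order,
        "-m", str(max_batch_size),
        "-w", str(workspace_size),
    ]
    if output_nodes:
        args += ["-o", ",".join(output_nodes)]
    if input_dims:
        args += ["-d", ",".join(str(d) for d in input_dims)]
    # -p may be repeated, once per input tensor.
    for name, min_shape, opt_shape, max_shape in profiles or ():
        shapes = ",".join(format_shape(s) for s in (min_shape, opt_shape, max_shape))
        args += ["-p", f"{name},{shapes}"]
    args.append(etlt_file)  # positional input_file goes last
    return args


if __name__ == "__main__":
    # File names, key and input name are placeholders, not real values.
    argv = build_converter_args(
        "model.etlt",
        "MY_KEY",
        engine_file="model.engine",
        data_type="fp16",
        profiles=[("Input", (1, 3, 544, 960), (8, 3, 544, 960), (16, 3, 544, 960))],
    )
    print(shlex.join(argv))
```

Passing the list directly to `subprocess.run(argv, check=True)` avoids shell quoting problems with the key. The conversion still fails the same way if the installed TensorRT cannot handle an op such as `_BatchTilePlugin_TRT`.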
